Lies, Damn Lies, and Website Statistics

This article was originally written for ArrowQuick Solutions, a technology consultancy for small businesses.

Traffic statistics are critical for analyzing your website’s performance and for figuring out what needs to change. But as with most statistics, interpretation is key.

How do I gather statistics?

There are two ways to collect data on visitor traffic.

First, the web servers that run websites usually save traffic information and errors into log files. These logs can then be processed and compiled into reports. Your hosting provider should have a way for you to view these reports.

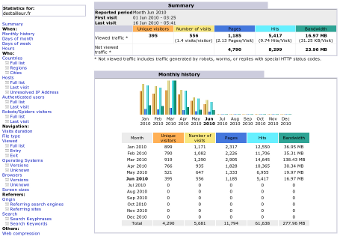

AWStats is a popular server log analyzer.

The other method is to use a third-party analyzer. After you sign up for an account with one of these services, you receive some code to add to your webpages. When someone visits a webpage, this code sends their traffic data to the service and it is recorded. You can then go to their site, log in, and view your statistics. These services often have great additional features, such as goal setting and conversion tracking, at little or no cost.

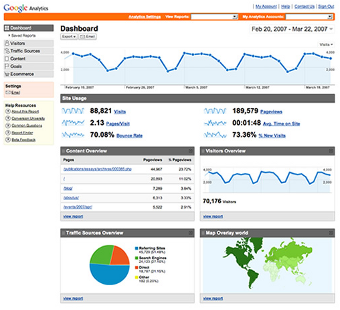

Google Analytics is a popular website analyzer.

You can use both methods together without any problems, and many site owners do. (Don’t use more than one of each, though.)

68% of statistics are wrong

After a while, you may notice something confusing: The statistics from the two sources don’t match.

The reason for this discrepancy is that the two kinds of analyzers have access to different data. This means what they are able to collect and report on will vary.

Web Servers

The logs used by your hosting provider are collected on the server. The web server can see what resource (webpage, image, document) was accessed and when. It can also make a pretty good guess about where the visitor is from, and what kind of computer they have.

The server works at a low level, so it is fast, but it is less accurate when it comes to some visitor information. On the other hand, server-side analyzers can record things that client-side services cannot: website errors, such as missing pages and broken links, and non-webpage traffic (images, documents, and multimedia).

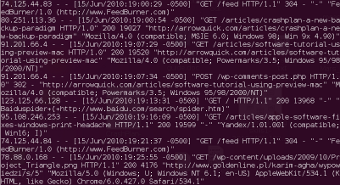

Server logs are stored as simple lines of text.
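
To make this concrete, here is a minimal Python sketch of what a log analyzer does with those lines. It assumes the common "combined" log layout used by many web servers; your host’s format may differ. The sample lines, `parse_line`, and `summarize` are made up for illustration and are not taken from AWStats or any other product.

```python
import re
from collections import Counter

# Pattern for the widely used "combined" log layout. This is an assumption:
# servers can be configured to write their logs in other formats.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+)'
    r'(?: "(?P<referrer>[^"]*)" "(?P<agent>[^"]*)")?'
)


def parse_line(line):
    """Return a dict of fields for one log line, or None if it doesn't match."""
    match = LOG_PATTERN.match(line)
    return match.groupdict() if match else None


def summarize(lines):
    """Count requests per path, and separately count requests that got a 404."""
    hits = Counter()
    missing = Counter()
    for line in lines:
        entry = parse_line(line)
        if entry is None:
            continue  # skip lines that don't fit the assumed format
        hits[entry["path"]] += 1
        if entry["status"] == "404":
            missing[entry["path"]] += 1
    return hits, missing


# Made-up sample lines, for illustration only.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Oct/2010:13:55:36 -0700] "GET /index.html HTTP/1.1" 200 2326 "-" "Mozilla/5.0"',
    '203.0.113.5 - - [10/Oct/2010:13:55:37 -0700] "GET /images/logo.png HTTP/1.1" 200 5120 "http://example.com/index.html" "Mozilla/5.0"',
    '198.51.100.7 - - [10/Oct/2010:14:02:11 -0700] "GET /old-page.html HTTP/1.1" 404 512 "-" "Mozilla/5.0"',
]

hits, missing = summarize(SAMPLE_LOG)
print(hits.most_common())     # every resource requested, including the image
print(missing.most_common())  # requests for pages that do not exist
```

Note that the image request and the missing page both show up here. A client-side service would not normally see either one, because its code only runs on webpages that load successfully.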

Third-party Services

Third-party analyzers like Google Analytics operate at the client level: that is, within the browser on the visitor’s own computer. Because they operate at a higher level, these services can see more information about the user’s setup, like the browser configuration and its installed plugins (Flash, Java, etc.).

The disadvantage of client-side services is that they are inherently slower than server analyzers. Every time someone visits your site, their browser needs to download and run the code, which then sends the stats to the service over the internet. This can make the webpage appear slower to your visitors. And although 95%+ of computers have the necessary technology to run the code, there is still a small audience of people who turn it off, or who use older or unsupported devices.

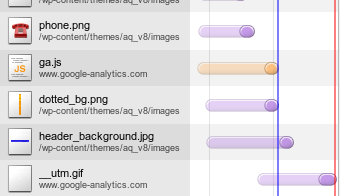

Google Analytics added about 240 milliseconds to this page's loading time -- 17% of the total.

What is a unique visitor?

In addition to the variation in the data itself, there are differences in how statistics software interprets the data.

Data points like pageviews and unique visitors are remarkably fuzzy. There is no strict definition of what a unique visitor is.

This is because the web is stateless. I’ll spare you the full technical explanation, but basically the server treats every request on its own and does not remember who made the previous one. This means that the software has to guess whether a visitor that views one webpage is the same visitor that views another webpage. And what if you visit a website, then leave and come back to that website 15 minutes later? Are you the same visitor, or a new visitor? Much of the difference between analyzers comes from how each one answers questions like these.
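
Here is a rough Python sketch of how that guess changes the numbers. It assumes a simple rule: a visitor who has been idle for longer than some timeout counts as a new visit when they return. The `count_visits` function, the timeout values, and the data are all hypothetical; real analyzers use their own rules and do not necessarily work this way.

```python
from datetime import datetime, timedelta


def count_visits(hits, timeout_minutes):
    """Count visits, starting a new one whenever the same visitor has been
    idle for longer than the timeout.

    `hits` is a list of (visitor_id, timestamp) tuples. In practice the
    visitor_id is itself a guess (an IP address, a cookie, and so on).
    """
    timeout = timedelta(minutes=timeout_minutes)
    last_seen = {}
    visits = 0
    for visitor_id, timestamp in sorted(hits, key=lambda hit: hit[1]):
        previous = last_seen.get(visitor_id)
        if previous is None or timestamp - previous > timeout:
            visits += 1
        last_seen[visitor_id] = timestamp
    return visits


# Made-up data: one visitor views two pages, then returns 15 minutes later.
HITS = [
    ("visitor-a", datetime(2010, 10, 10, 13, 0)),
    ("visitor-a", datetime(2010, 10, 10, 13, 2)),
    ("visitor-a", datetime(2010, 10, 10, 13, 17)),
]

print(count_visits(HITS, timeout_minutes=10))  # 2
print(count_visits(HITS, timeout_minutes=30))  # 1
```

The same three pageviews count as two visits under a 10-minute rule and one visit under a 30-minute rule. Neither answer is wrong; they are just different definitions.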

So where does this leave me?

The bottom line is that website statistics are not 100% accurate.

You should still use these services, but remember to take the numbers with a grain of salt. Don’t look at absolutes. Instead, look at the relative change over time. Has traffic to your site increased? Are there more new visitors than before? Are visitors staying longer?
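
As a small illustration of looking at relative change, the Python sketch below compares month-over-month growth from two sources. The visit totals are invented, and `percent_change` and `monthly_trend` are hypothetical helper names, not part of any analytics product.

```python
def percent_change(previous, current):
    """Return the change from previous to current as a percentage."""
    if previous == 0:
        raise ValueError("previous must be non-zero")
    return (current - previous) / previous * 100


def monthly_trend(series):
    """Percent change between each pair of consecutive months."""
    return [round(percent_change(a, b), 1) for a, b in zip(series, series[1:])]


# Made-up monthly visit totals as reported by two different analyzers.
server_log_visits = [1200, 1320, 1500]
client_side_visits = [1000, 1100, 1250]

print(monthly_trend(server_log_visits))   # [10.0, 13.6]
print(monthly_trend(client_side_visits))  # [10.0, 13.6]
```

In this made-up example the two sources never agree on the absolute number of visits, yet they tell the same story about growth. That is the kind of signal worth paying attention to.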

It is still useful to have both server- and client-side analyzers. Some data is simply unavailable from one method or the other, so you’ll want to make sure everything is covered. Use a client-side service like Google Analytics as your primary source, and look at your host’s reports regularly for information about errors and non-webpage files.
